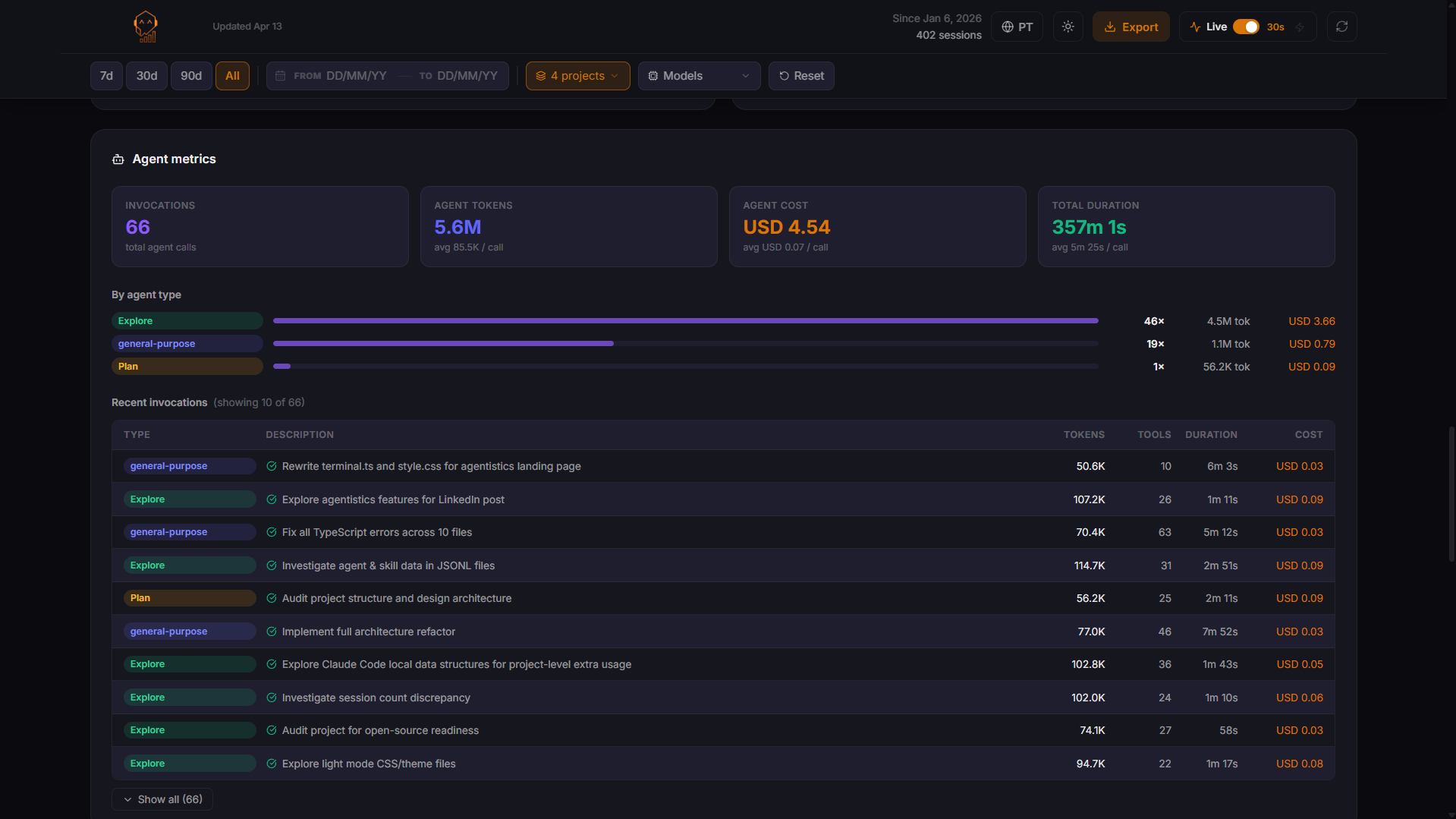

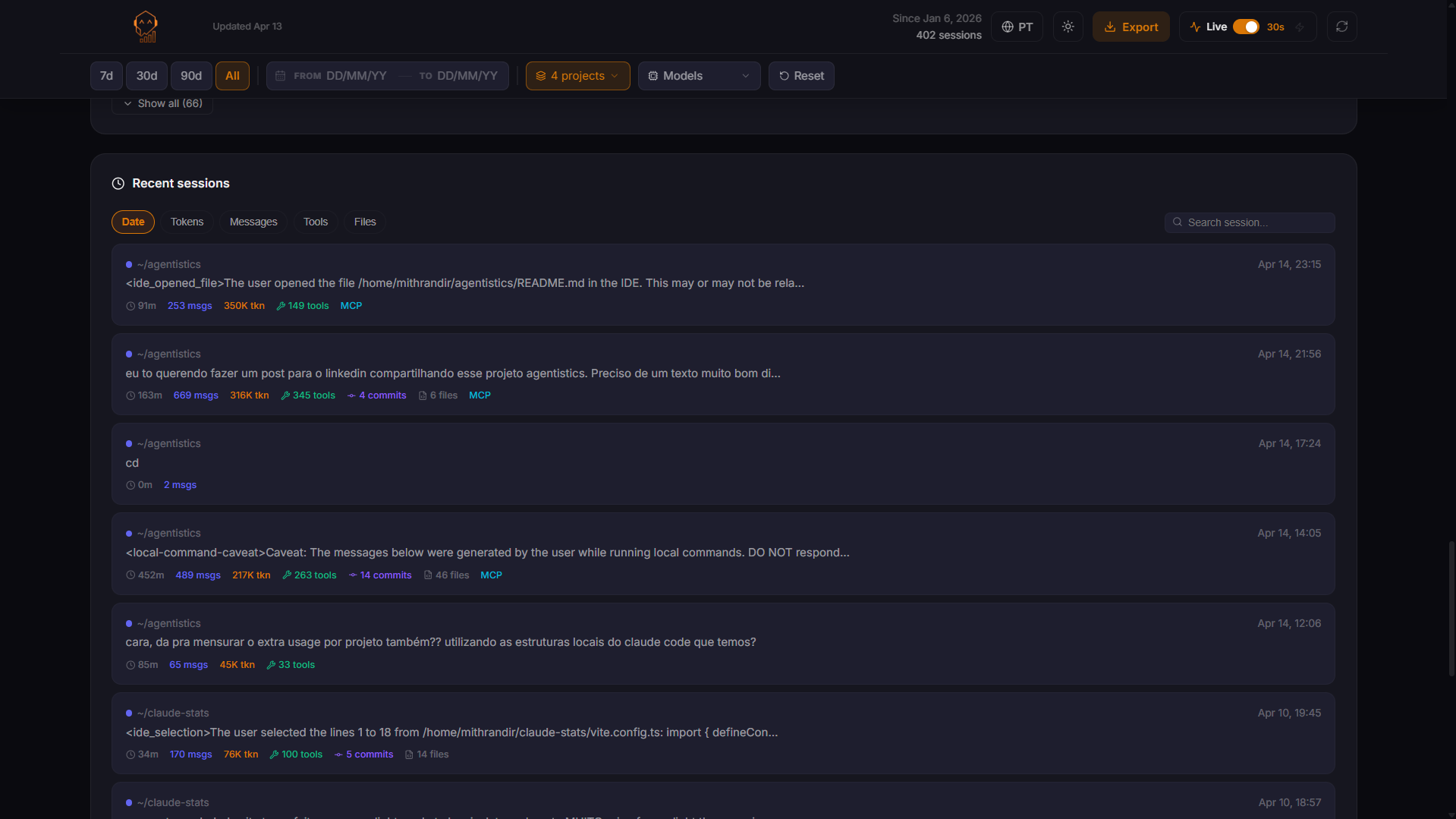

Every metric.

One interface.

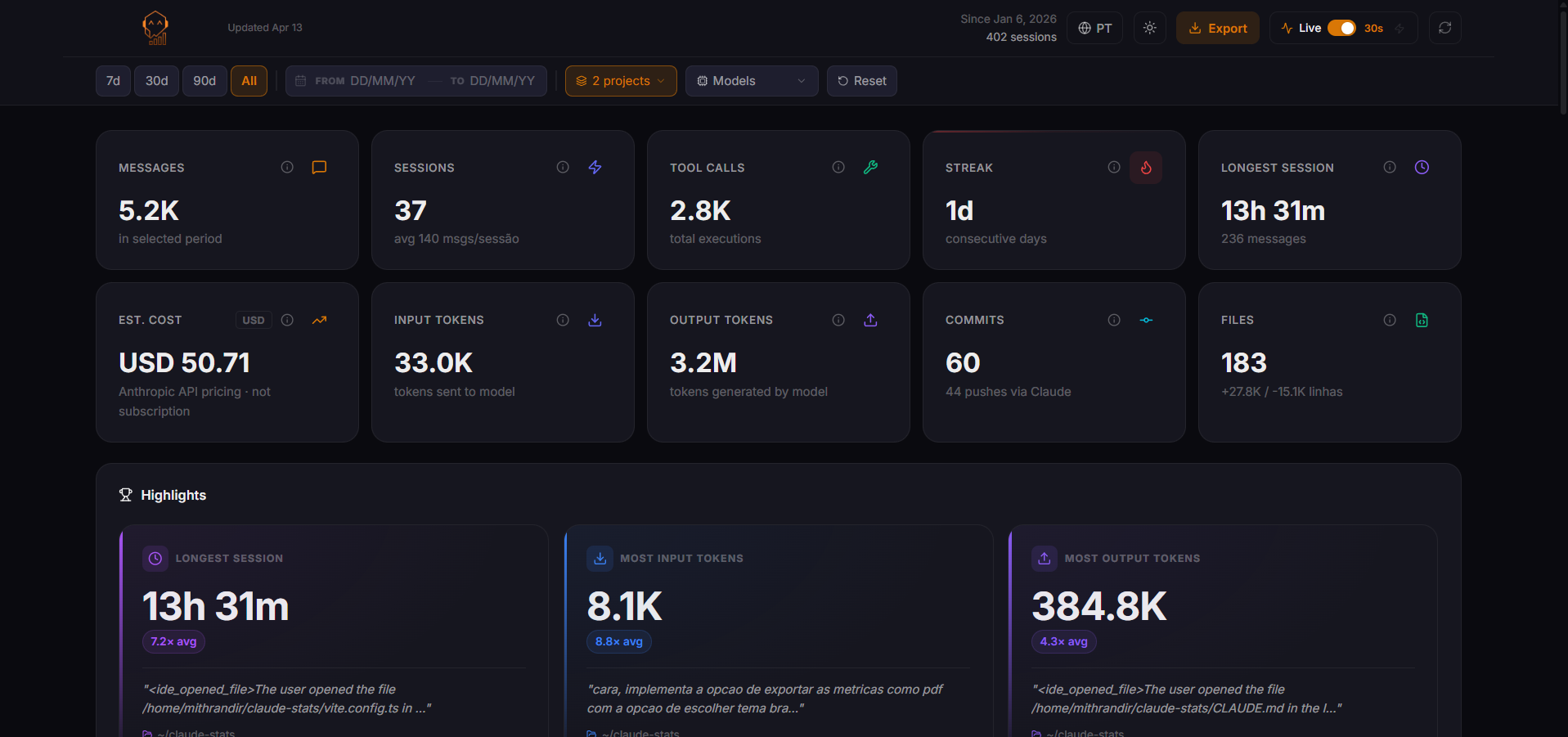

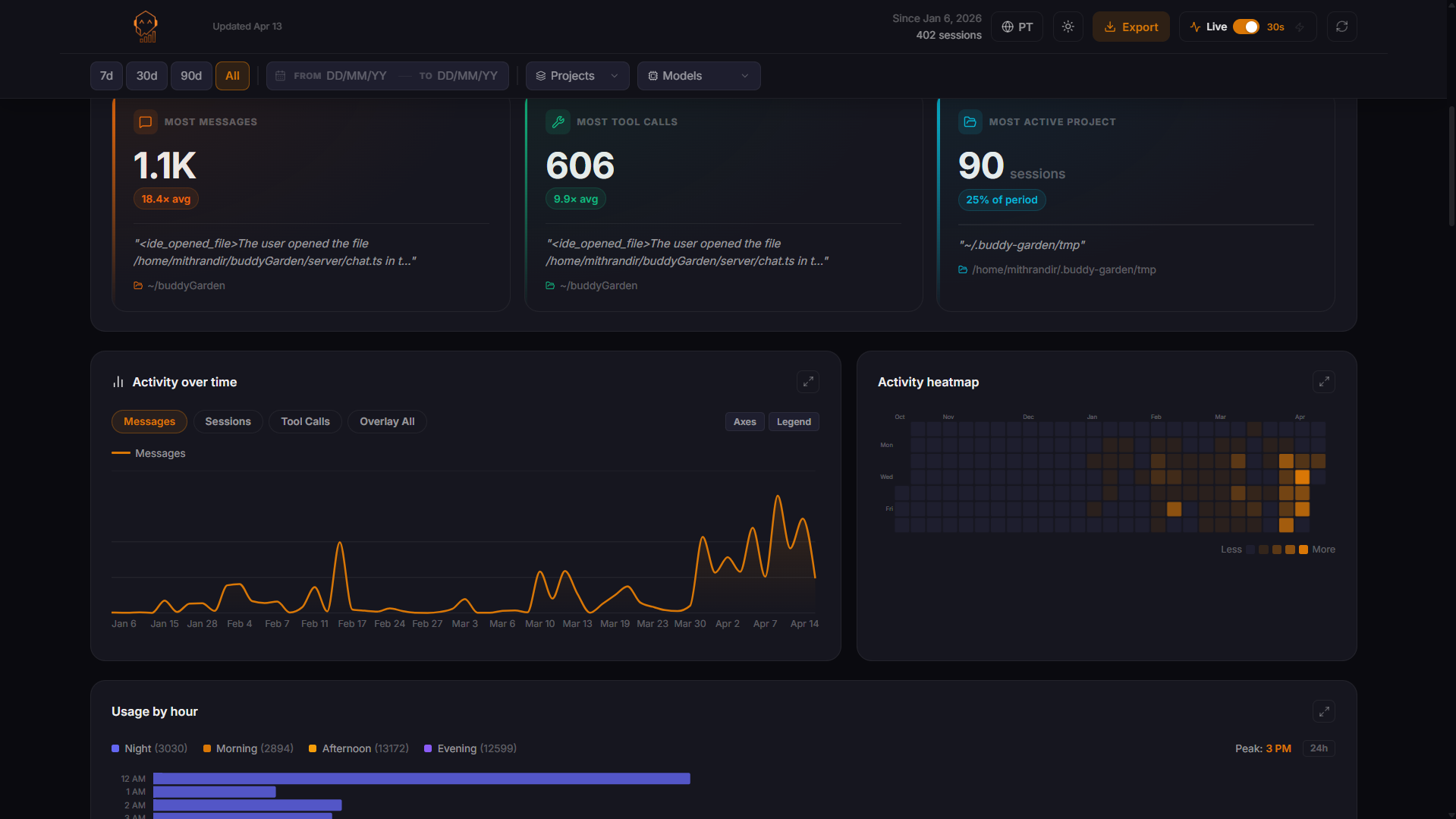

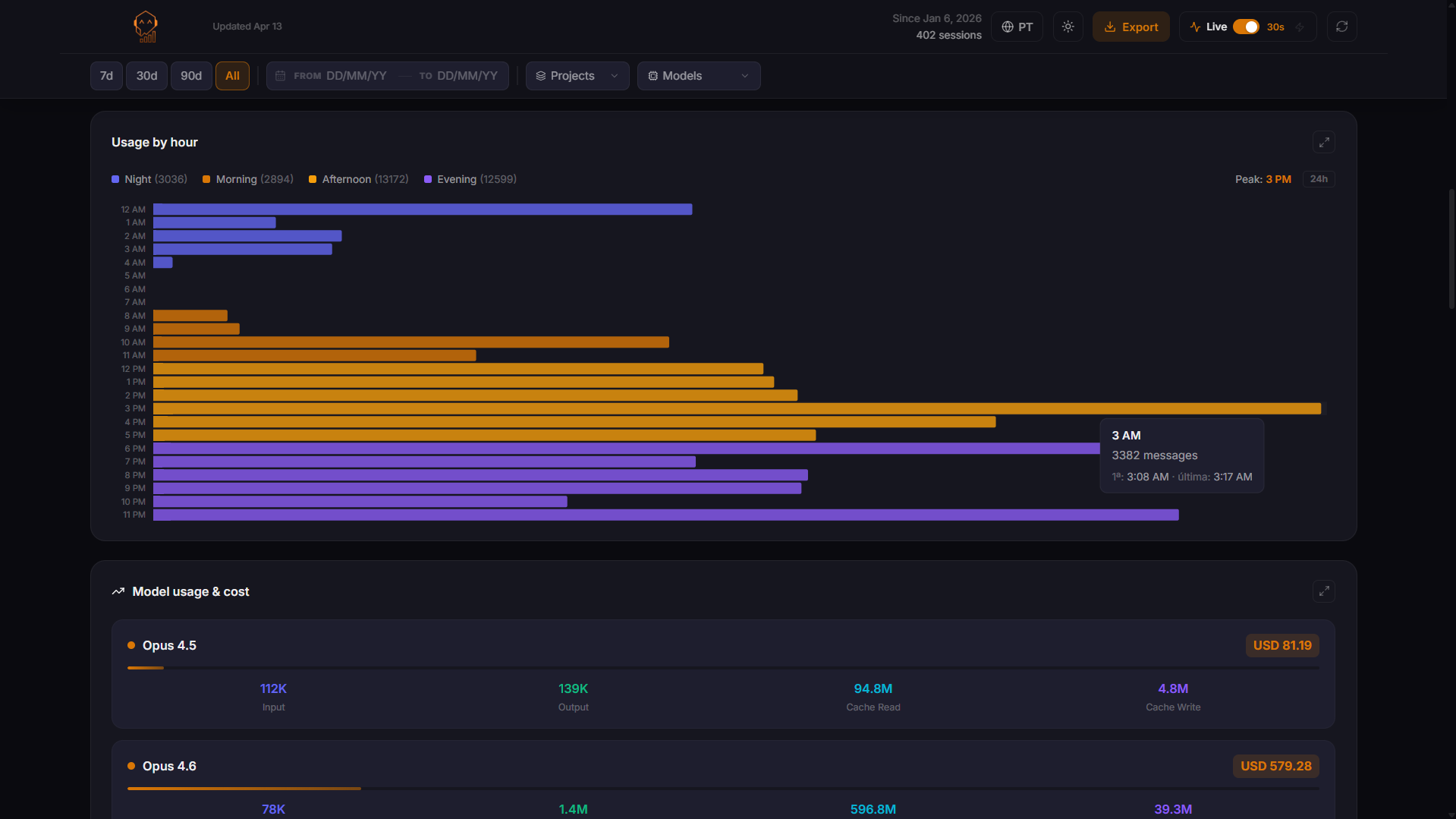

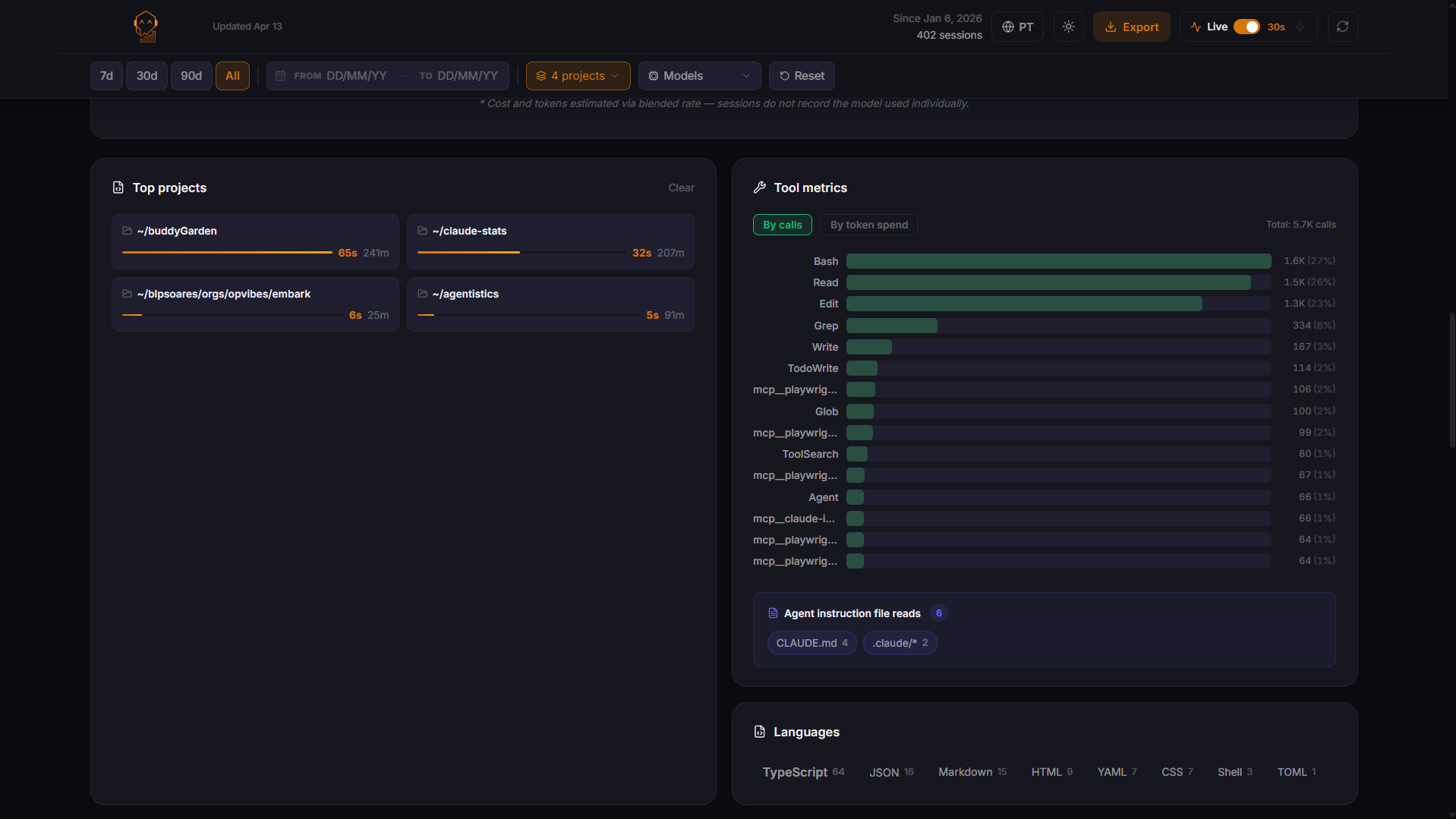

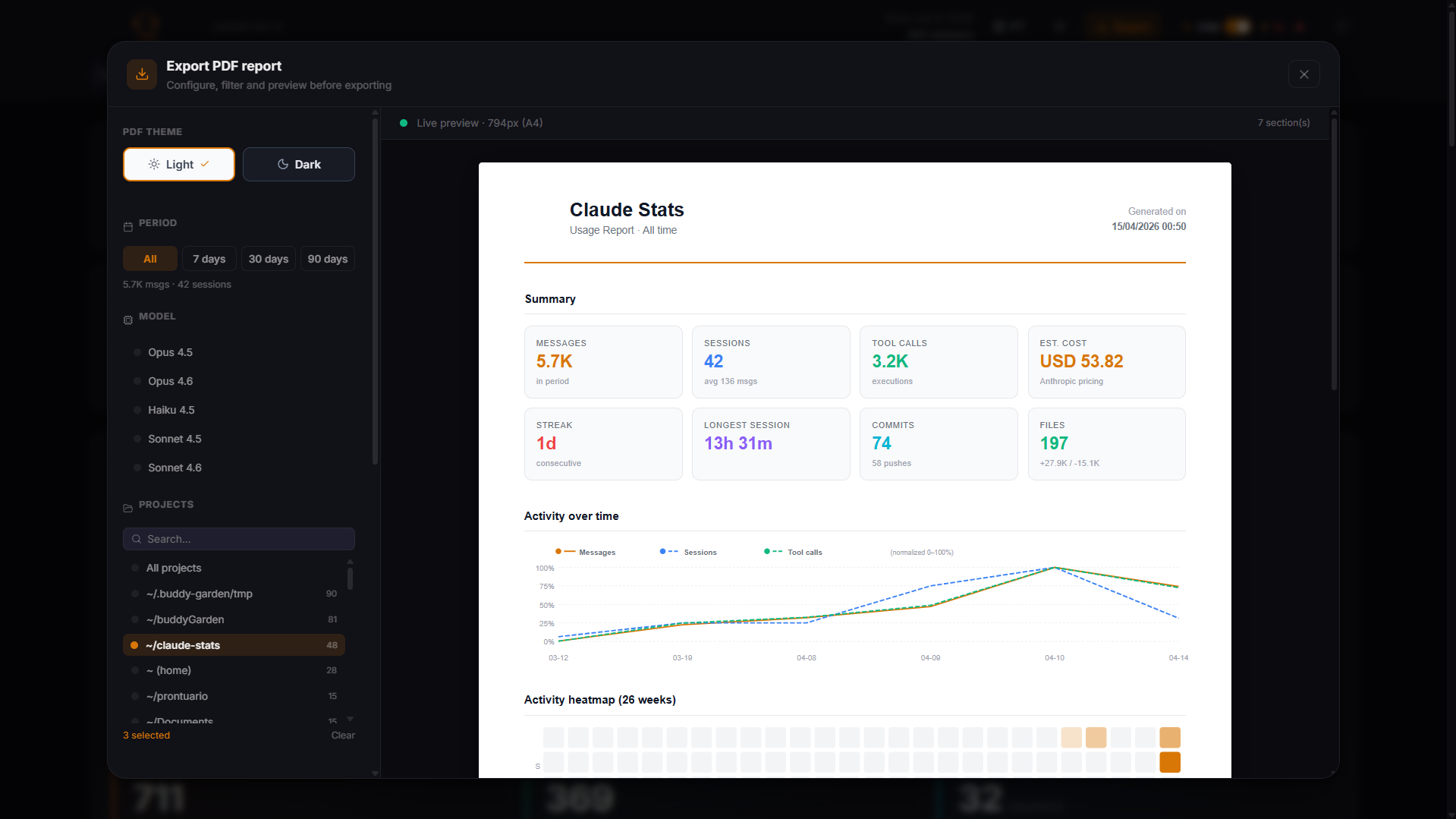

Real screenshots from a live agentistics instance.

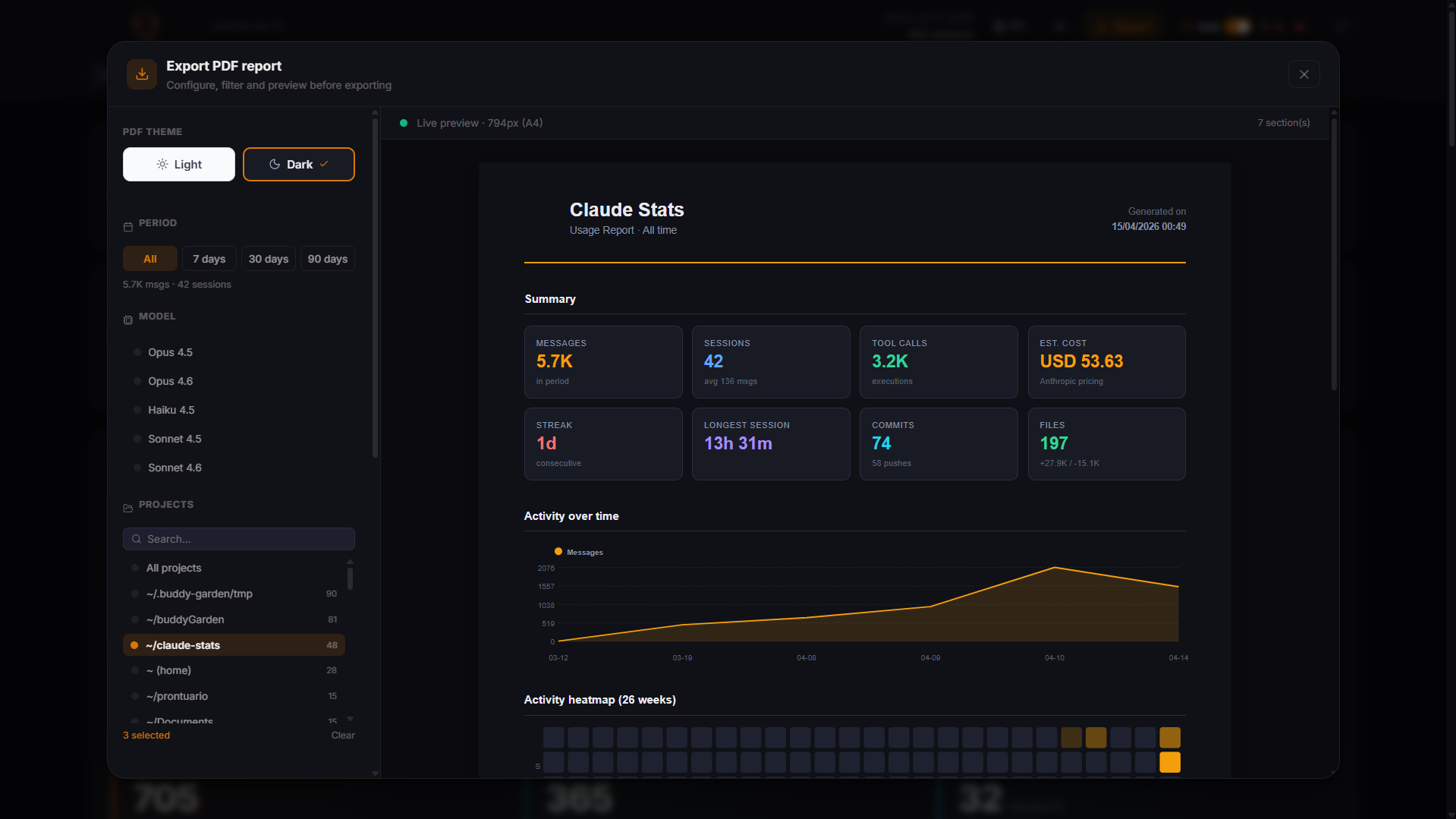

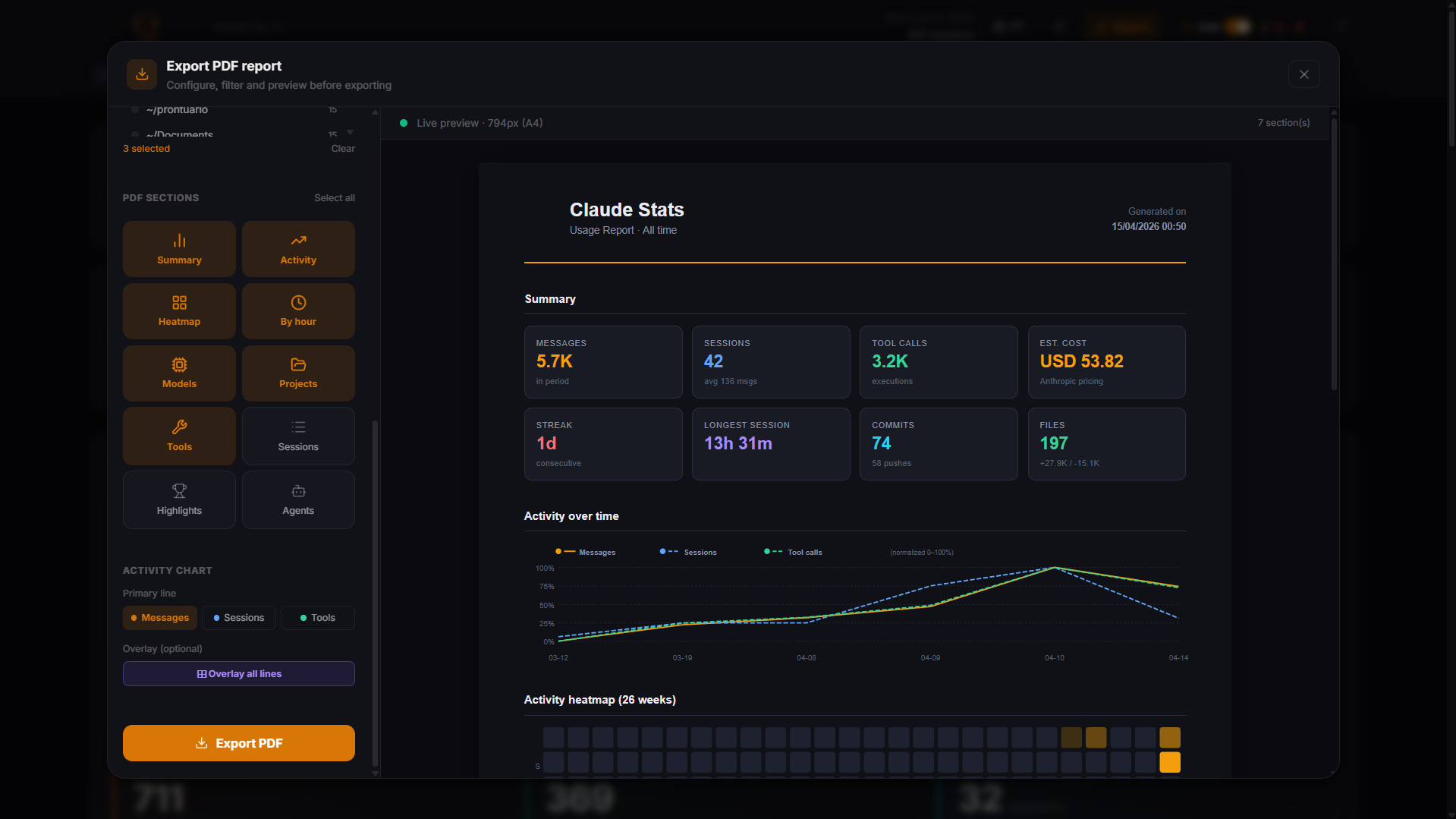

PDF Export

One-click report — dark & light themes, shareable anywhere

Ready to see your own data?